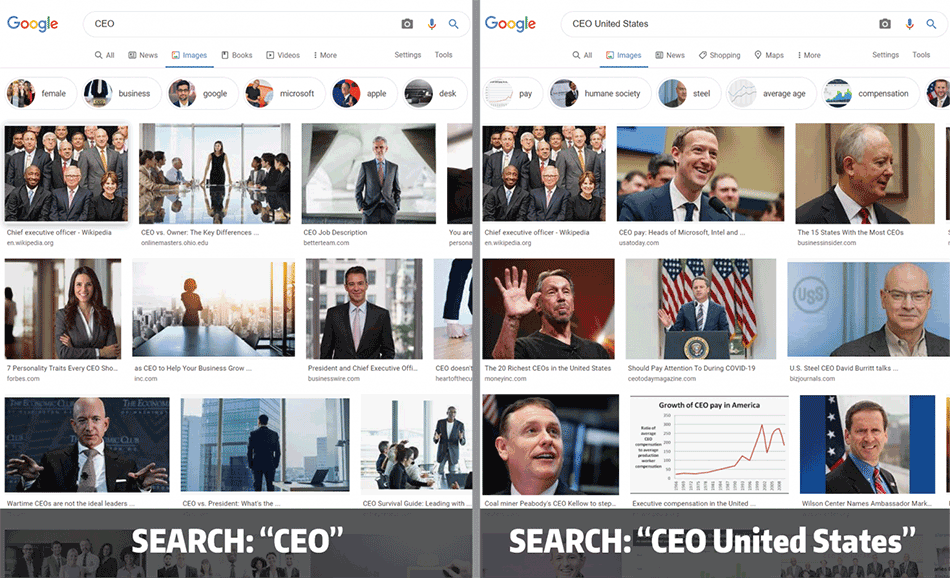

We use Google’s image search to help us understand the world around us. For example, a search about a certain profession, “truck driver” for instance, should yield images that show us a representative smattering of people who drive trucks for a living.

But in 2015, University of Washington researchers found that in searches for a variety of occupations, including “CEO,” women were significantly underrepresented in the image results, and that these results can change searchers’ worldviews. Since then, Google has claimed to have fixed this issue.

A different UW team recently put that claim to the test. The researchers showed that for four major search engines from around the world, including Google, this bias is only partially fixed, according to a paper presented in February at the AAAI Conference on Artificial Intelligence. A search for an occupation, such as “CEO,” yielded results with a ratio of cis-male to cis-female presenting people that matches the current statistics. But when the team added another search term (for example, “CEO + United States”), the image search returned fewer photos of cis-female presenting people. In the paper, the researchers propose three potential solutions to this issue.

“My lab has been working on the issue of bias in search results for a while, and we wondered if this CEO image search bias had only been fixed on the surface,” said senior author Chirag Shah, a UW associate professor in the Information School. “We wanted to be able to show that this is a problem that can be systematically fixed for all search terms, instead of something that has to be fixed with this kind of ‘whack-a-mole’ approach, one problem at a time.”

The team investigated image search results for Google as well as for China’s search engine Baidu, South Korea’s Naver and Russia’s Yandex. The researchers ran image searches for 10 common occupations, including CEO, biologist, computer programmer and nurse, both with and without an additional search term, such as “United States.”

“This is a common approach to studying machine learning systems,” said lead author Yunhe Feng, a UW postdoctoral fellow in the iSchool. “Similar to how people do crash tests on cars to make sure they are safe, privacy and security researchers try to challenge computer systems to see how well they hold up. Here, we just changed the search term slightly. We didn’t expect to see such different outputs.”

For each search, the team collected the top 200 images and then used a combination of volunteers and gender detection AI software to identify each face as cis-male or cis-female presenting.

One limitation of this study is that it assumes that gender is a binary, the researchers acknowledged. But that allowed them to compare their findings to data from the U.S. Bureau of Labor Statistics for each occupation.

The researchers were especially curious about how the gender bias ratio changed depending on how many images they looked at.

“We know that people spend most of their time on the first page of the search results because they want to find an answer very quickly,” Feng said. “But maybe if people did scroll past the first page of search results, they would start to see more diversity in the images.”

When the team added “+ United States” to the Google image searches, some occupations had larger gender bias ratios than others. Looking at more images sometimes resolved these biases, but not always.
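To make the idea of looking at more images concrete, here is a minimal Python sketch of how one might track the share of cis-female presenting faces as the number of results grows. The article does not give the paper's exact definition of the gender bias ratio, so the functions `female_share_at_k` and `bias_gap`, the toy labels and the reference share of 0.4 are all illustrative assumptions, not the researchers' data or method.

```python
from typing import List


def female_share_at_k(labels: List[str], k: int) -> float:
    """Fraction of the top-k results labeled "F" (cis-female presenting)."""
    top = labels[:k]
    if not top:
        raise ValueError("k must cover at least one result")
    return sum(1 for g in top if g == "F") / len(top)


def bias_gap(labels: List[str], k: int, reference_share: float) -> float:
    """Top-k female share minus a reference share (e.g. a labor statistic).

    Negative values mean women are underrepresented relative to the
    reference. This gap is an assumed stand-in for the paper's metric.
    """
    return female_share_at_k(labels, k) - reference_share


# Made-up labels in rank order; NOT data from the study.
labels = ["M", "M", "M", "F", "M", "M", "F", "F", "M", "F"]
REFERENCE_SHARE = 0.4  # hypothetical occupation statistic

for k in (5, 10):
    print(k, female_share_at_k(labels, k), bias_gap(labels, k, REFERENCE_SHARE))
```

In this toy ranking the female share is 0.2 in the top five results and 0.4 in the top 10, so the gap closes only once a searcher scrolls further down, which is the kind of pattern the researchers were checking for.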

While the other search engines showed differences for specific occupations, overall the trend remained: The addition of another search term changed the gender ratio.

“This is not just a Google problem,” Shah said. “I don’t want to make it sound like we are playing some kind of favoritism toward other search engines. Baidu, Naver and Yandex are all from different countries with different cultures. This problem seems to be rampant. This is a problem for all of them.”

The team designed three algorithms to systematically address the issue. The first randomly shuffles the results.

“This one tries to shake things up to keep it from being so homogeneous at the top,” Shah said.

The other two algorithms add more strategy to the image-shuffling. One includes the image’s “relevance score,” which search engines assign based on how relevant a result is to the search query. The other requires the search engine to know the statistics bureau data; the algorithm then shuffles the search results so that the top-ranked images follow the real trend.
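The three strategies can be loosely sketched in Python as follows. This is not the researchers' implementation, which the article does not detail: the `Result` record, the weighted-sampling rule in `relevance_weighted_rerank` and the greedy prefix rule in `statistics_aware_rerank` are assumptions chosen to illustrate the general idea, and the sample data is made up.

```python
import random
from collections import deque
from typing import List, NamedTuple, Optional


class Result(NamedTuple):
    image_id: str
    gender: str       # "F" or "M", from the labeling step (binary, as in the study)
    relevance: float  # hypothetical relevance score from the search engine


def shuffle_rerank(results: List[Result], seed: Optional[int] = None) -> List[Result]:
    """Strategy 1: randomly shuffle the results."""
    out = list(results)
    random.Random(seed).shuffle(out)
    return out


def relevance_weighted_rerank(results: List[Result], seed: Optional[int] = None) -> List[Result]:
    """Strategy 2 (assumed form): draw results one at a time without
    replacement, with probability proportional to relevance."""
    rng = random.Random(seed)
    pool = list(results)
    out = []
    while pool:
        weights = [max(r.relevance, 1e-9) for r in pool]  # keep weights positive
        pick = rng.choices(range(len(pool)), weights=weights, k=1)[0]
        out.append(pool.pop(pick))
    return out


def statistics_aware_rerank(results: List[Result], target_female_share: float) -> List[Result]:
    """Strategy 3 (assumed form): interleave the two groups so that every
    prefix of the ranking tracks the target share, keeping the original
    order within each group."""
    women = deque(r for r in results if r.gender == "F")
    men = deque(r for r in results if r.gender == "M")
    out: List[Result] = []
    placed_f = 0
    while women or men:
        position = len(out) + 1
        want_f = placed_f < target_female_share * position
        if women and (want_f or not men):
            out.append(women.popleft())
            placed_f += 1
        else:
            out.append(men.popleft())
    return out


# Made-up results in their original rank order.
results = [
    Result("a", "M", 0.9), Result("b", "M", 0.8), Result("c", "M", 0.7),
    Result("d", "F", 0.6), Result("e", "M", 0.5), Result("f", "F", 0.4),
]
reranked = statistics_aware_rerank(results, target_female_share=0.5)
print([r.gender for r in reranked])  # ['F', 'M', 'F', 'M', 'M', 'M']
```

Under these assumptions, the statistics-aware version is the only one that steers the top of the ranking toward a specific target. The other two only loosen the original ordering, which may be why they would help less when the starting bias is small.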

The researchers tested their algorithms on the image datasets collected from the Google, Baidu, Naver and Yandex searches. For occupations with a large bias ratio — for example, “biologist + United States” or “CEO + United States” — all three algorithms were successful in reducing gender bias in the search results. But for occupations with a smaller bias ratio — for example, “truck driver + United States” — only the algorithm with knowledge of the actual statistics was able to reduce the bias.

Although the team’s algorithms can systematically reduce bias across a variety of occupations, the real goal will be to see these types of reductions show up in searches on Google, Baidu, Naver and Yandex.

“We can explain why and how our algorithms work,” Feng said. “But the AI model behind the search engines is a black box. It may not be the goal of these search engines to present information fairly. They may be more interested in getting their users to engage with the search results.”

For more information, contact Shah at chirags@uw.edu and Feng at yunhe@uw.edu.

This story was originally published by UW News.